
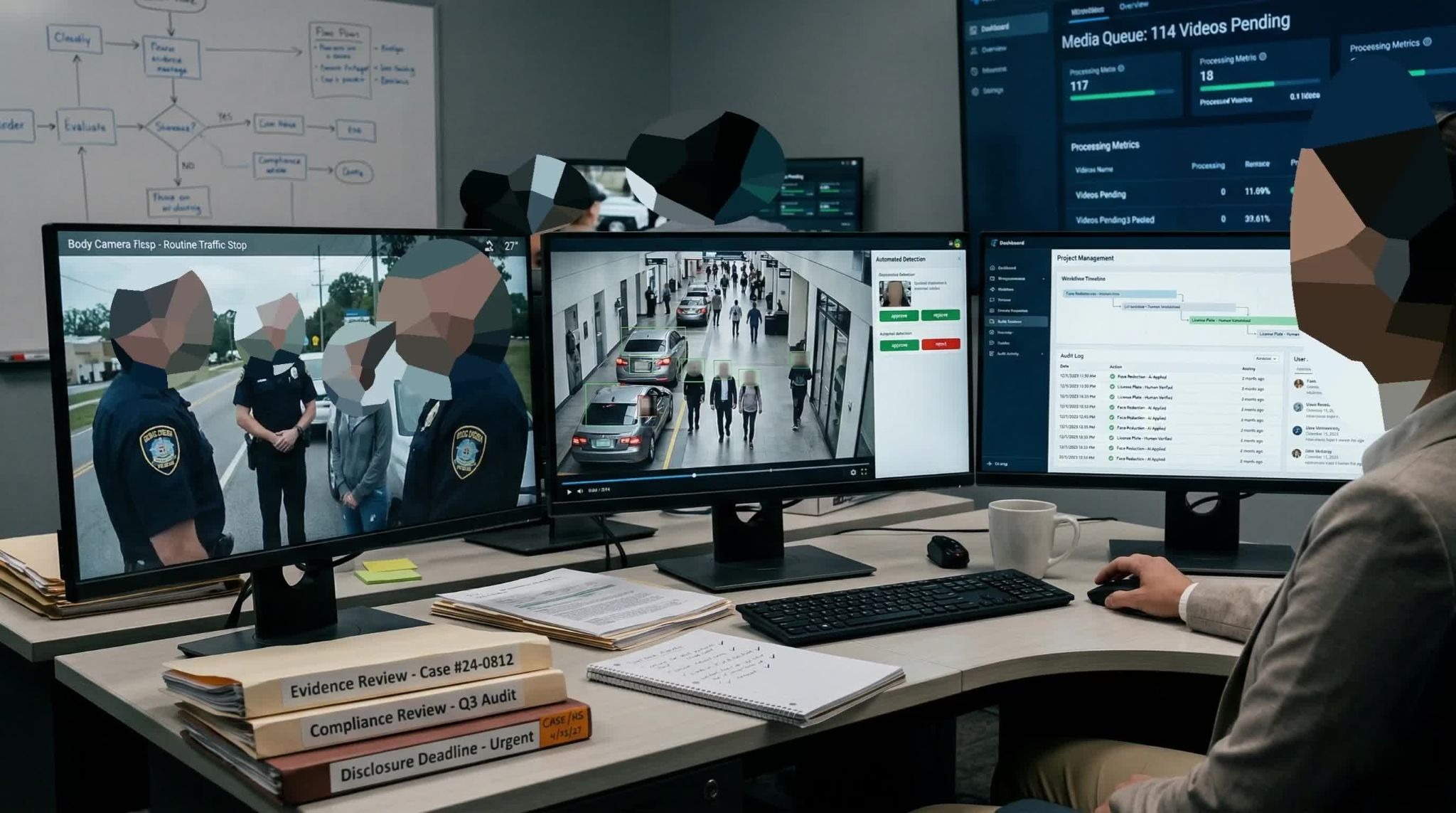

Choosing redaction software requires evaluating detection accuracy, workflow control, deployment flexibility, and compliance alignment with frameworks like FOIA, CJIS, HIPAA, and GDPR. Sighthound Redactor detects, tracks, and redacts heads, people, license plates, vehicles, IDs, screens, and documents across video, image, and audio, with options to blur visuals and mute or scramble sound. It supports law enforcement, legal teams, and enterprises that need reliable, scalable redaction without exposing sensitive data.

If you are comparing redaction software, you are probably trying to solve two problems at once: moving faster through growing media backlogs and reducing the risk of a privacy mistake that becomes a legal or reputational issue.

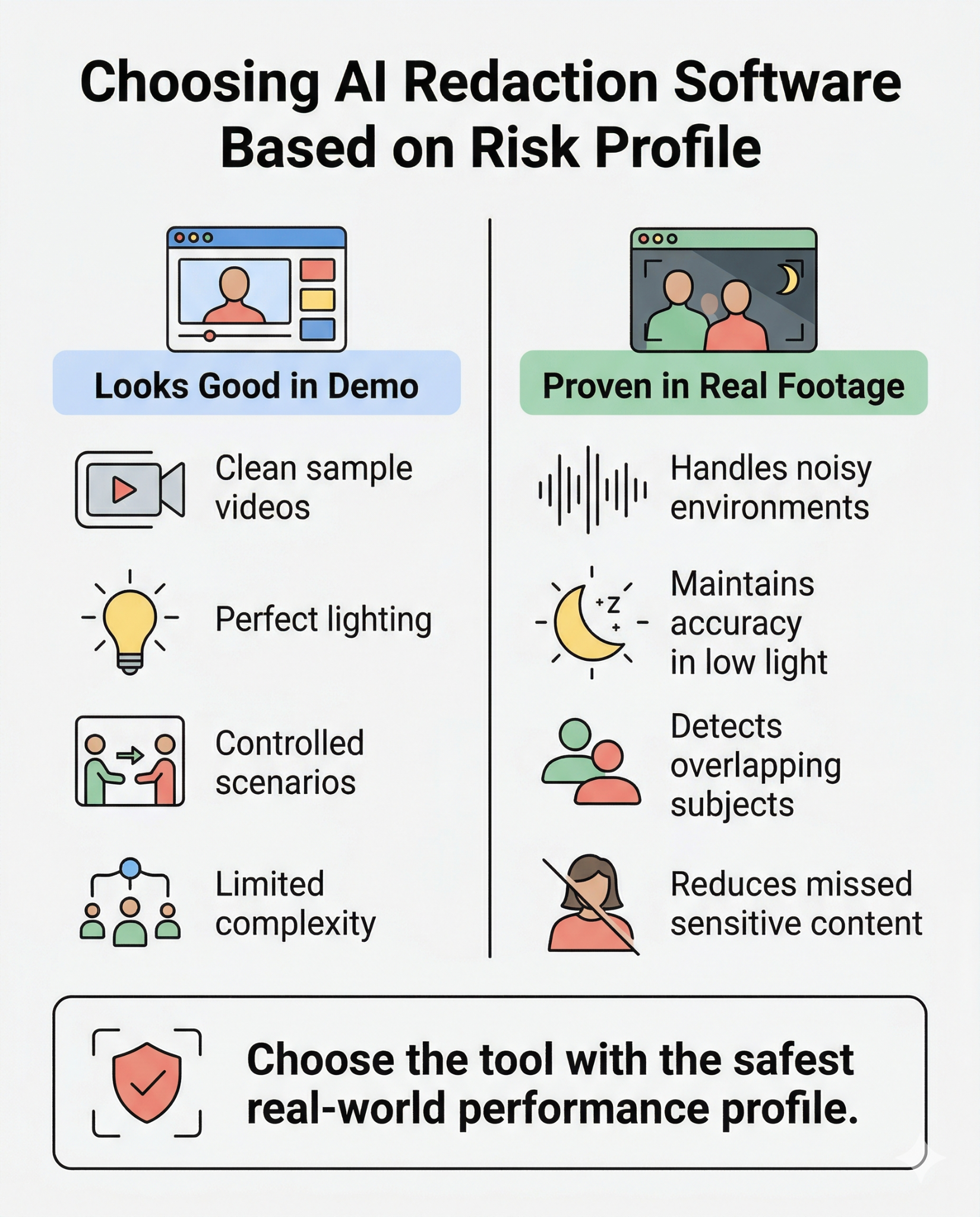

That is exactly where most teams get stuck. Vendor demos make many tools look similar, especially when every product claims automation, AI detection, and fast exports. But real-world outcomes depend less on feature lists and more on how a platform performs under your actual workload: noisy bodycam footage, mixed media formats, tight disclosure timelines, and cross-team approvals.

This guide is built for public records teams, law enforcement, legal departments, healthcare organizations, and compliance teams evaluating video redaction software for operational use. Instead of comparing marketing language, use these five questions to evaluate what matters most:

Whenever you are evaluating automated video redaction solutions, these questions give stakeholders a shared framework for selecting redaction software that is both efficient and defensible.

Automation is useful only when it reduces repetitive work without weakening governance. In practice, that means separating three layers of capability:

Many teams over-index on detection and miss the workflow layer. A tool may detect objects well but still force analysts into manual status tracking, ad hoc handoffs, and inconsistent approvals. That creates bottlenecks even when model quality is strong.

Strong automated redaction should make the analyst faster, not less accountable. You want explicit human checkpoints for high-risk disclosures, plus role-based permissions that prevent unauthorized exports.
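One way to picture the checkpoint and permission rules described above is a small export gate. This is a minimal sketch, not any vendor's API: the role names, the `ExportRequest` fields, and the `can_export` function are all hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass

# Hypothetical role names; a real deployment would define its own.
EXPORT_ROLES = {"supervisor", "records_manager"}


@dataclass
class ExportRequest:
    requester_role: str      # role of the person requesting the export
    high_risk: bool          # whether the disclosure is flagged as high risk
    reviewer_approved: bool  # whether a human reviewer has signed off


def can_export(req: ExportRequest) -> bool:
    """Allow an export only for permitted roles, and require
    human approval whenever the disclosure is high risk."""
    if req.requester_role not in EXPORT_ROLES:
        return False
    if req.high_risk and not req.reviewer_approved:
        return False
    return True
```

During a pilot, the equivalent question is whether the product enforces rules like these itself or leaves them to manual process.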

What to test during pilots

Decision signal

If automation reduces cycle time but increases QA rework or approval confusion, the workflow design is not mature enough. The right redaction software improves speed and control at the same time.

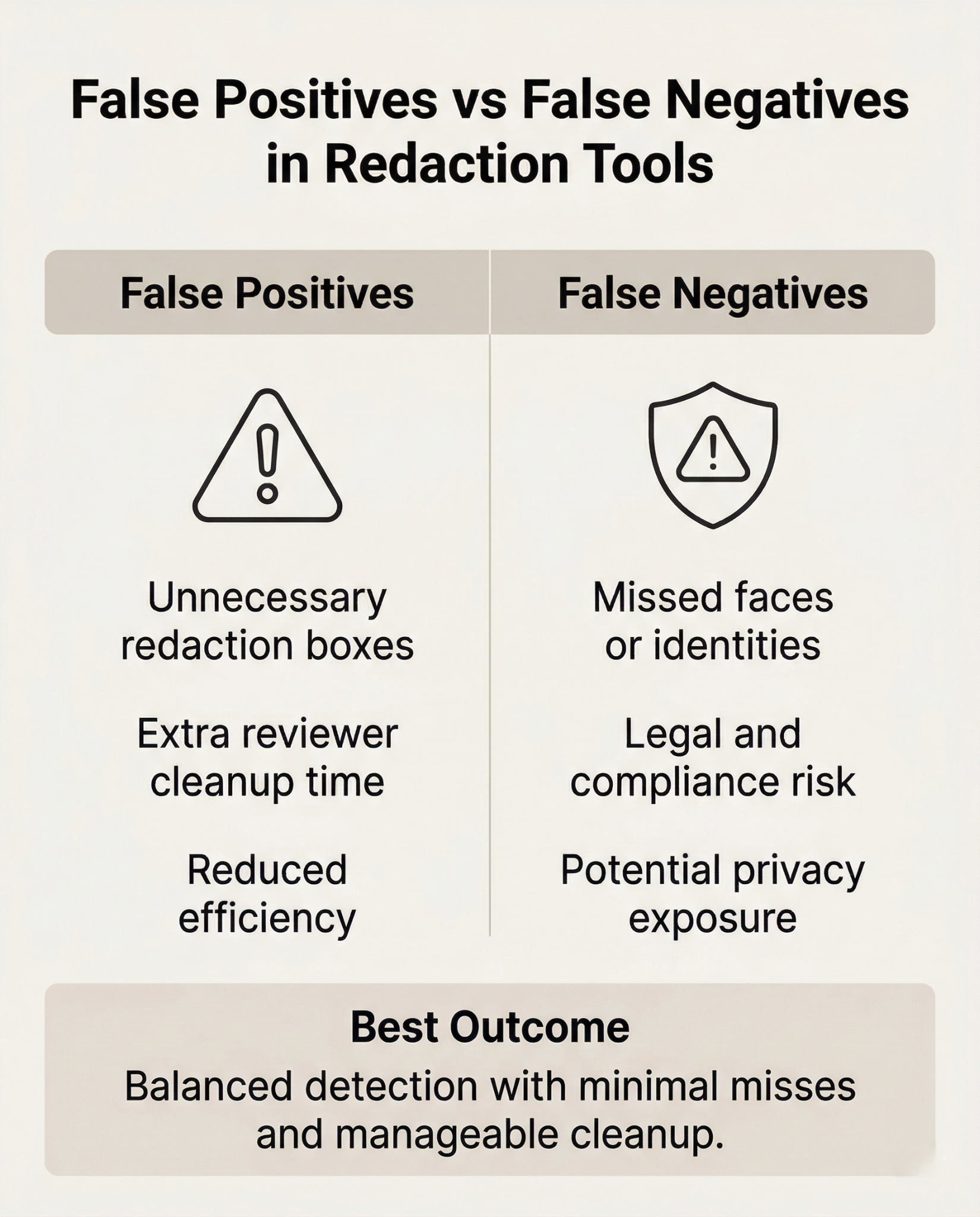

This is the make-or-break question for AI redaction tools. False negatives can expose sensitive information. False positives can overwhelm reviewers and erase efficiency gains. You need both metrics, not one.
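The two error types map onto the standard recall and precision metrics. The sketch below assumes you have hand-labeled a pilot set and counted correct detections, spurious detections, and misses; the `DetectionCounts` class and function names are illustrative, not part of any product.

```python
from dataclasses import dataclass


@dataclass
class DetectionCounts:
    true_positives: int   # sensitive items the tool correctly flagged
    false_positives: int  # flags on content that was not sensitive
    false_negatives: int  # sensitive items the tool missed


def recall(c: DetectionCounts) -> float:
    """Share of sensitive items that were caught (low recall = exposure risk)."""
    total = c.true_positives + c.false_negatives
    return c.true_positives / total if total else 0.0


def precision(c: DetectionCounts) -> float:
    """Share of flags that were correct (low precision = reviewer cleanup)."""
    total = c.true_positives + c.false_positives
    return c.true_positives / total if total else 0.0


counts = DetectionCounts(true_positives=90, false_positives=30, false_negatives=10)
print(recall(counts), precision(counts))  # 0.9 0.75
```

Reporting both numbers per footage category (for example, low-light versus daylight) makes vendor comparisons harder to skew with a favorable sample.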

Do not rely on curated samples. Build a representative evaluation set that mirrors your evidence reality:

When teams evaluate video redaction software, they often discover that top-performing demo tools diverge quickly on low-light or unstable footage. That gap directly affects disclosure safety and staffing load.

How to score detection quality

Decision signal

Choose the platform with the best risk-adjusted profile, not the prettiest demo. A tool that requires slightly more reviewer cleanup may still be safer if it materially reduces missed sensitive content such as PII.

A common failure in video evidence redaction is continuity, not initial detection. A mask appears in one frame, drops during motion or occlusion, then reappears. That one-frame exposure can still create a compliance incident.

Reliable tracking is critical when footage includes:

If tracking breaks frequently, analysts return to frame-by-frame correction. Throughput drops, fatigue rises, and error risk increases.
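A continuity check can be approximated by comparing the frames in which an object is visible with the frames in which a mask was actually applied. The sketch below assumes you can obtain both sets from ground-truth annotation and from the tool's output; `find_mask_gaps` and its inputs are hypothetical names, not a real product interface.

```python
def find_mask_gaps(visible_frames: range, masked_frames: set[int]) -> list[tuple[int, int]]:
    """Return (first_frame, last_frame) spans where the object is
    visible but no mask was applied."""
    gaps = []
    start = None
    prev = None
    for frame in visible_frames:
        if frame not in masked_frames:
            if start is None:
                start = frame  # a new unmasked span begins here
            prev = frame
        elif start is not None:
            gaps.append((start, prev))  # the mask returned; close the span
            start = None
    if start is not None:
        gaps.append((start, prev))  # span ran to the end of visibility
    return gaps


# Object visible in frames 0-9; the mask dropped at frame 3 and frames 7-8.
print(find_mask_gaps(range(0, 10), {0, 1, 2, 4, 5, 6, 9}))  # [(3, 3), (7, 8)]
```

Even a single-frame gap such as `(3, 3)` counts as an exposure, so a pilot pass criterion could reasonably be an empty list for every tracked object.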

Continuity checks to include in a pilot

Decision signal

For high-stakes disclosure workflows, continuity performance often matters more than first-frame detection speed. The best redaction software keeps protections stable throughout the clip, even when the footage is messy.

A visually correct output is only part of defensible redaction. You also need process evidence that shows who did what, when, and under which policy.

For privacy compliance redaction, your system should capture:

This matters in FOIA challenges, litigation support, internal investigations, and regulatory review. If an output is questioned months later, your team should be able to reconstruct the complete decision trail without guesswork.
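To make "reconstruct the decision trail" concrete, here is a minimal sketch of an append-only log in which each entry records the hash of the previous one, so later edits become detectable. Hash chaining is an assumption introduced for illustration; the source does not say how any particular product stores its logs, and the field names (`actor`, `action`, `timestamp`, `policy`) are hypothetical.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(body: dict) -> str:
    # Serialize with sorted keys so the hash is stable across runs.
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


def append_entry(log: list, actor: str, action: str, timestamp: str, policy: str) -> dict:
    """Append a record of who did what, when, and under which policy."""
    prev_hash = log[-1]["entry_hash"] if log else GENESIS_HASH
    body = {"actor": actor, "action": action, "timestamp": timestamp,
            "policy": policy, "prev_hash": prev_hash}
    entry = {**body, "entry_hash": _digest(body)}
    log.append(entry)
    return entry


def verify_chain(log: list) -> bool:
    """Return True only if no entry was altered, removed, or reordered."""
    prev_hash = GENESIS_HASH
    for entry in log:
        body = {k: v for k, v in entry.items() if k != "entry_hash"}
        if body["prev_hash"] != prev_hash or _digest(body) != entry["entry_hash"]:
            return False
        prev_hash = entry["entry_hash"]
    return True
```

Whatever mechanism a vendor actually uses, the pilot question is the same: can the log be exported, and can tampering or gaps be detected after the fact?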

Audit-readiness checks

Decision signal

If logs are shallow, hard to export, or disconnected from version history, treat that as a material risk. Redaction software should support legal defensibility, not just visual editing.

A platform can look excellent in a small pilot and still fail in production when the data request volume spikes. Scalability is operational, not just computational.

Test whether the system supports:

This is especially important when teams handle mixed disclosure workloads at once. Fragmented toolchains can create inconsistent outputs, duplicated effort, and higher training overhead.

If your environment includes bodycam, interview audio, and surveillance footage, unified processing matters. A single workflow for video evidence redaction and related media is usually easier to govern than separate specialized tools.

Scale validation metrics

Decision signal

The right redaction software stays predictable under pressure. It should help teams meet statutory timelines without trading away accuracy or compliance controls.
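"Predictable under pressure" can be checked by comparing how many requests meet the deadline at normal volume versus during a simulated spike. The sketch below is one simple way to do that; the function name, the sample turnaround times, and the 40-hour deadline are illustrative assumptions, not measured data.

```python
def on_time_rate(turnaround_hours: list[float], deadline_hours: float) -> float:
    """Fraction of requests completed within the deadline."""
    if not turnaround_hours:
        raise ValueError("need at least one completed request")
    on_time = sum(1 for hours in turnaround_hours if hours <= deadline_hours)
    return on_time / len(turnaround_hours)


# Illustrative numbers only: the same deadline applied to two pilot runs.
normal_load = [10.0, 20.0, 30.0, 35.0]
spike_load = [10.0, 20.0, 30.0, 50.0]
print(on_time_rate(normal_load, 40.0))  # 1.0
print(on_time_rate(spike_load, 40.0))   # 0.75
```

A large drop between the two runs suggests the platform, the staffing model, or both will struggle when request volume rises.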

Evaluate for Robustness

Choosing redaction software is a risk-management decision as much as a productivity decision. The strongest evaluations focus on five outcomes: trustworthy automation, real-world detection accuracy, frame-level continuity, defensible audit records, and reliable scaling under load.

Run your pilot with representative media, stress scenarios, and measurable pass/fail criteria. Then compare Sighthound Redactor and other vendors against the same scorecard. That keeps the process objective and helps legal, compliance, and operations teams align on a platform they can trust in production.

Teams that choose and validate AI redaction carefully are better positioned to deliver efficiency, compliance, and public trust.

Want to learn more about AI-powered redaction & digital evidence compliance? Try Sighthound Redactor today.

Want more insights? Read our AI-powered redaction best practices. Need a live demo? Schedule a Redactor demo now.
